This year, the annual KMWorld Conference took place from November 16–19 as a virtual event under the title “KMWorld Connect 2020.” It included the co-hosted conferences Enterprise Search & Discovery, Taxonomy Boot Camp, and Text Analytics Forum. I was privileged to join the conference for the first time.

Organizing one of the largest knowledge management conferences online must have been quite an endeavor. The web conferencing platform, PheedLoop, allowed participants to attend sessions from the four conferences as they happened and to chat and answer attendees’ questions at virtual booths. Audience questions appeared in real time during presentations, and presenters answered them at the end of each talk.

One major shortcoming of online conferences is, of course, the lack of live face-to-face communication. Even so, the quality of the content exceeded my expectations.

I would like to touch on some of the topics covered at the conference.

Taxonomy & Ontology

The knowledge management industry has recently made great advances in taxonomies. As stated in many presentations, applied taxonomies have become commonplace at enterprises and in many cases have progressed to more complex knowledge organization systems such as ontologies. According to Wikipedia, “an ontology encompasses a representation, formal naming, and definition of the categories, properties, and relations between the concepts, data, and entities that substantiate one, many, or all domains.”
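To make the step from taxonomy to ontology concrete, here is a minimal Python sketch. It is only an illustration and was not shown at the conference: the `Ontology` class and the sample categories (`Document`, `Report`, `Person`) are hypothetical. The parent links alone form a taxonomy; adding properties and named relations between categories is what turns it into a small ontology.

```python
from dataclasses import dataclass, field


@dataclass
class Ontology:
    """Toy ontology: categories, their properties, and relations between them."""
    categories: dict = field(default_factory=dict)  # name -> parent name (or None)
    properties: dict = field(default_factory=dict)  # category -> set of property names
    relations: set = field(default_factory=set)     # (subject, relation, object) triples

    def add_category(self, name, parent=None):
        self.categories[name] = parent
        self.properties.setdefault(name, set())

    def add_property(self, category, prop):
        self.properties[category].add(prop)

    def add_relation(self, subject, relation, obj):
        self.relations.add((subject, relation, obj))

    def ancestors(self, name):
        """Walk up the parent links; this part is all a plain taxonomy offers."""
        chain = []
        parent = self.categories.get(name)
        while parent is not None:
            chain.append(parent)
            parent = self.categories.get(parent)
        return chain

    def inherited_properties(self, name):
        """Properties defined on the category itself plus all of its ancestors."""
        props = set(self.properties.get(name, set()))
        for ancestor in self.ancestors(name):
            props |= self.properties.get(ancestor, set())
        return props


# Hypothetical sample data, chosen only for illustration.
onto = Ontology()
onto.add_category("Document")
onto.add_category("Report", parent="Document")
onto.add_category("Person")
onto.add_property("Document", "title")
onto.add_property("Report", "fiscal_year")
onto.add_relation("Person", "authored", "Document")

print(onto.ancestors("Report"))
print(sorted(onto.inherited_properties("Report")))
```

Production ontologies are normally expressed in standards such as RDF or OWL rather than ad hoc classes; the sketch only shows which pieces the definition above refers to.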

Knowledge Graph & Graph DB

Probably every fourth presentation at KMWorld mentioned knowledge graphs or presented a business case built on one. The term enterprise knowledge graph, or EKG (not to be confused with the abbreviation for electrocardiogram), also came up, which reflects the industry enthusiasm for knowledge graphs.

In recent years, knowledge graphs have become more accessible to enterprises through advances in technology. In particular, graphs are now easier to implement in graph databases, which can federate different content sources under one roof, whether behind a firewall or in the public domain. Norconex has recently made available its new open-source crawlers for Neo4j, one of the larger names in the field of graph databases. Here you can find an example of Norconex’s crawlers being used to import wine varietal data from the web into a Neo4j graph database.
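The idea of federating content sources in one graph can be sketched in a few lines of Python. This is a simplified in-memory illustration, not the Norconex crawler or the Neo4j API: the `KnowledgeGraph` class, the source names, and the sample wine facts are all assumptions made for the example.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Tiny in-memory graph of (subject, predicate, object) facts tagged by source."""

    def __init__(self):
        # subject -> set of (predicate, object, source) tuples
        self.edges = defaultdict(set)

    def add(self, subject, predicate, obj, source):
        self.edges[subject].add((predicate, obj, source))

    def load(self, source, triples):
        """Merge every triple from one content source into the shared graph."""
        for subject, predicate, obj in triples:
            self.add(subject, predicate, obj, source)

    def neighbors(self, subject, predicate=None):
        """Objects linked to a subject, optionally filtered by predicate."""
        facts = self.edges.get(subject, ())
        return sorted(o for p, o, _ in facts if predicate is None or p == predicate)

    def sources(self, subject):
        """Which content sources contributed facts about this subject."""
        return sorted({s for _, _, s in self.edges.get(subject, ())})


# Hypothetical data: one source behind the firewall, one crawled from the web.
internal_catalog = [("Merlot", "stocked_in", "Warehouse A")]
public_web = [("Merlot", "is_a", "Red wine"), ("Merlot", "grown_in", "Bordeaux")]

kg = KnowledgeGraph()
kg.load("internal_catalog", internal_catalog)
kg.load("public_web", public_web)

print(kg.neighbors("Merlot"))
print(kg.sources("Merlot"))
```

A graph database plays the role of `KnowledgeGraph` at scale, adding persistence, indexing, and a query language. The point here is only that facts from separate sources end up attached to the same node.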

Semantic Search & ML

Ontologies implemented as knowledge graphs are key enabling technologies behind semantic search. Introduced by Google and now gaining traction at enterprises, semantic search is a search method that infers user intent from context and content to generate and rank search results. A semantic search–capable system returns results that are relevant to the meaning of the search phrase, not just its words. The context of the searched words, combined with the user’s browsing history and profile, helps the search engine decide which result best satisfies the query. I liked an example of semantic search that came up during one of the panel discussions: two seemingly very close terms, “black dress” and “black dress shoes,” produce totally different results when searched on Google. This is not easily achievable with a regular keyword-based search technique.
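The “black dress” example can be reproduced with a toy Python sketch. It is not how Google or any real engine works. The catalog, the `CONCEPTS` lexicon, and the function names are hypothetical, and real semantic search draws on far richer signals such as user context and machine-learned models. The sketch only contrasts plain token matching with a lookup that first maps the query to a concept.

```python
import re

# Hypothetical product catalog: title -> concept it belongs to.
CATALOG = {
    "Little black dress": "dress",
    "Black evening dress": "dress",
    "Black leather dress shoes": "shoes",
}

# Hypothetical phrase-to-concept lexicon, standing in for an ontology.
CONCEPTS = {
    ("dress", "shoes"): "shoes",
    ("dress",): "dress",
    ("shoes",): "shoes",
}


def tokenize(text):
    return re.findall(r"[a-z]+", text.lower())


def keyword_search(query):
    """Return every title that contains all query tokens, regardless of meaning."""
    wanted = set(tokenize(query))
    return sorted(t for t in CATALOG if wanted <= set(tokenize(t)))


def infer_concept(query):
    """Pick the concept of the longest known phrase found in the query."""
    tokens = tokenize(query)
    for size in range(len(tokens), 0, -1):
        for start in range(len(tokens) - size + 1):
            phrase = tuple(tokens[start:start + size])
            if phrase in CONCEPTS:
                return CONCEPTS[phrase]
    return None


def concept_search(query):
    """Return only the titles whose concept matches the inferred query intent."""
    concept = infer_concept(query)
    return sorted(t for t, c in CATALOG.items() if c == concept)


print(keyword_search("black dress"))
print(concept_search("black dress"))
print(concept_search("black dress shoes"))
```

With this data, the keyword search for “black dress” also returns the dress shoes because both tokens appear in that title, while the concept-based search keeps dresses and shoes apart.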

Recent advances in machine learning have considerably improved the ability of algorithms to analyze text and other types of unstructured content. Creative use of advanced machine learning techniques has proven effective for supplementing semantic search. A few interesting presentations at KMWorld covered this topic in depth.

____________

Overall, KMWorld Connect 2020 hosted a great many case studies, interesting discussions, and amazing insights, including introductions to new resources, sharing of tools and strategies, learning from colleagues, and much more.

Norconex looks forward to participating in the event next year.

The next presenter, Michael Basnight, Software Engineer at Elastic, provided an Elastic Stack roadmap with demos of the latest and upcoming features. Kibana has added new capabilities, including infrastructure and logs user interfaces, to become much more than just the main user interface of the Elastic Stack. He introduced Fleet, which provides centralized configuration deployment, Beats monitoring, and upgrade management. Frozen indices allow for more index storage by keeping indices available without taking up heap memory until they are requested. He also highlighted advanced machine learning analytics for outlier detection, supervised model training for regression and classification, and an ingest prediction processor. Elasticsearch performance has increased through the use of Weak AND (also called “WAND”), with improvements as high as 3,700% for term search and between 28% and 292% for other query types.

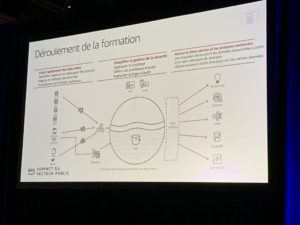

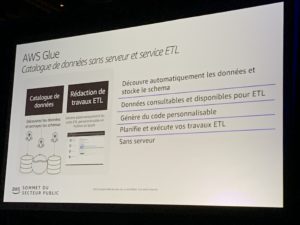

In the keynote sessions, it was great to hear Alex Benay (deputy minister at the Treasury Board of Canada) talk about the government’s modern digital initiative. He discussed the approach, successes, and challenges of the government’s Cloud migration journey. Another excellent speaker was Mohamed Frendi (director of IT, innovation, science, and economic development for the government of Canada). He covered Canada’s API Store and how it uses the Cloud to make government data more accessible.
